Why Signature-Based Detection Falls Short in Zero Trust

For decades, security teams relied on signature-based detection: matching known-bad indicators against traffic and log data. This approach fails when adversaries use legitimate credentials, operate within authorized network segments, and mimic normal user behavior. Zero Trust assumes breach, which means defenders must treat the threat as already inside the network. Behavior-based anomaly detection therefore shifts the focus from identifying known malicious patterns to detecting deviations from established baselines of normal activity.

In a Zero Trust architecture, every user, device, and workload has an expected behavioral profile. When an entity begins acting outside that profile, the anomaly detection system flags the deviation, scores its severity, and feeds the result into the policy decision point. This approach catches compromised accounts, insider threats, and novel attack techniques that have no existing signature.

Building Behavioral Baselines

Effective anomaly detection begins with establishing what “normal” looks like. Baselines must be constructed at multiple granularity levels: per-user, per-role, per-device, and per-workload. A baseline that is too broad, such as a single organization-wide profile, hides subtle deviations inside ordinary variation and fails to detect sophisticated attacks. A baseline that is too narrow treats routine variation as anomalous and produces excessive false positives.

User Behavior Baselines

User behavior analytics (UBA) systems ingest authentication logs, application access logs, file interaction events, and network flow data to construct a profile for each identity. Key dimensions include typical login times, geographic locations, frequency and pattern of resource access, data volume transferred, and the specific set of applications and services used during a standard work cycle.

For example, a database administrator who typically queries production databases between 09:00 and 18:00 UTC from a single corporate subnet has a well-defined baseline. If that same account begins executing bulk data exports at 03:00 UTC from an IP address associated with a commercial VPN provider, the deviation is measurable and significant.
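As a rough illustration of how the deviation in this example could be measured, the sketch below compares a single event against a per-user baseline. The `UserBaseline` structure, its field names, the subnet `10.20.0.0/24`, the byte counts, and the 10x volume factor are all hypothetical values chosen to mirror the administrator scenario; a real UBA system would learn them from telemetry.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass
class UserBaseline:
    # Hypothetical per-user profile learned from historical telemetry.
    active_hours_utc: range          # hours of the day with normal activity
    known_networks: list             # CIDR blocks the user normally connects from
    typical_bytes_per_session: int   # e.g. median transfer volume per session


def deviations(baseline, hour_utc, source_ip, bytes_transferred, volume_factor=10):
    """Return the names of the baseline dimensions that this event violates."""
    flags = []
    if hour_utc not in baseline.active_hours_utc:
        flags.append("unusual_hour")
    addr = ip_address(source_ip)
    if not any(addr in ip_network(net) for net in baseline.known_networks):
        flags.append("unknown_network")
    if bytes_transferred > volume_factor * baseline.typical_bytes_per_session:
        flags.append("bulk_transfer")
    return flags


# Administrator active 09:00-18:00 UTC from one corporate subnet (assumed values).
dba = UserBaseline(range(9, 18), ["10.20.0.0/24"], 5_000_000)

normal = deviations(dba, 14, "10.20.0.37", 4_000_000)
suspicious = deviations(dba, 3, "203.0.113.50", 900_000_000)
```

Here `normal` is empty, while `suspicious` lists all three dimensions. Each flag could then be weighted and scored rather than treated as a binary verdict.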

Workload Behavior Baselines

In microservices architectures, each service has a predictable communication pattern. Service A calls Service B and Service C during normal operation, using specific HTTP methods on specific endpoints, at a predictable rate. When Service A suddenly begins calling Service D (a sensitive internal service it has never contacted before) at 10 times its normal request rate, the anomaly detection system should flag this as a potential lateral movement indicator.
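One way to picture the workload case is a per-service record of observed call edges and hourly request volume. The sketch below is a simplified assumption, not a reference design: the `ServiceBaseline` class, the service names, and the 10x rate factor are hypothetical.

```python
from collections import Counter


class ServiceBaseline:
    """Tracks which destinations a service calls and at what hourly rate."""

    def __init__(self):
        self.edge_counts = Counter()  # (method, destination) -> calls observed
        self.hours_observed = 0

    def learn(self, calls):
        """Record one hour of (method, destination) calls from the baseline period."""
        self.edge_counts.update(calls)
        self.hours_observed += 1

    def check(self, calls, rate_factor=10):
        """Compare one hour of calls with the baseline and return findings."""
        findings = []
        mean_rate = sum(self.edge_counts.values()) / max(self.hours_observed, 1)
        known = {dest for _, dest in self.edge_counts}
        for dest in sorted({dest for _, dest in calls} - known):
            findings.append(f"new destination: {dest}")
        if len(calls) >= rate_factor * mean_rate:
            findings.append(f"rate {len(calls)}/h vs baseline {mean_rate:.0f}/h")
        return findings


baseline = ServiceBaseline()
for _ in range(24):  # one day of hourly samples for Service A
    baseline.learn([("GET", "service-b")] * 60 + [("POST", "service-c")] * 40)

quiet = baseline.check([("GET", "service-b")] * 60 + [("POST", "service-c")] * 40)
findings = baseline.check([("GET", "service-d")] * 1000)
```

The second check reports both the never-before-seen destination and the tenfold rate increase, which together suggest possible lateral movement.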

- Collect at least 30 days of telemetry before activating enforcement based on anomaly scores, so that baselines rest on a representative sample that covers weekly and monthly cycles.

- Segment baselines by day-of-week and time-of-day to account for cyclical patterns such as end-of-month reporting or weekly deployment windows.

- Incorporate role-based grouping so that when a user changes teams, their baseline can be seeded from the new role’s aggregate profile rather than starting from scratch.

- Account for seasonal variations and planned events such as security audits, penetration tests, and infrastructure migrations that may temporarily shift normal patterns.
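The segmentation and role-seeding recommendations above could be sketched as follows. The `SegmentedBaseline` class, the `min_samples` cutoff, and the numeric values are illustrative assumptions; the point is only that each (weekday, hour) slot keeps its own history and falls back to a role-level profile while personal history is thin.

```python
from collections import defaultdict
from datetime import datetime, timezone
from statistics import mean


class SegmentedBaseline:
    """Keeps observations per (weekday, hour) bucket, with a role-level fallback."""

    def __init__(self, role_profile=None, min_samples=4):
        self.buckets = defaultdict(list)
        self.role_profile = role_profile or {}  # (weekday, hour) -> expected value
        self.min_samples = min_samples

    def observe(self, timestamp, value):
        self.buckets[(timestamp.weekday(), timestamp.hour)].append(value)

    def expected(self, timestamp):
        """Expected value for this time slot, or None if nothing is known."""
        key = (timestamp.weekday(), timestamp.hour)
        samples = self.buckets.get(key, [])
        if len(samples) >= self.min_samples:
            return mean(samples)
        # Too little personal history: fall back to the role's aggregate profile.
        return self.role_profile.get(key)


monday_10 = datetime(2024, 1, 1, 10, tzinfo=timezone.utc)  # a Monday, 10:00 UTC
role = {(0, 10): 120.0}  # hypothetical aggregate for the user's new role

user = SegmentedBaseline(role_profile=role)
seeded = user.expected(monday_10)  # role value while history is thin

for week in range(4):  # four consecutive Mondays of personal history
    when = datetime(2024, 1, 1 + 7 * week, 10, tzinfo=timezone.utc)
    user.observe(when, 80 + week)
personal = user.expected(monday_10)  # now the mean of the user's own samples
```

Planned events such as audits or migrations could be handled in the same structure by excluding or tagging observations from those windows before they enter a bucket.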

Detection Techniques and Algorithms

Anomaly detection in a Zero Trust context typically employs a combination of statistical methods, machine learning models, and rule-based heuristics. No single technique is sufficient on its own. A layered approach reduces false positives while maintaining detection coverage.

Statistical Methods

Z-score analysis and interquartile range (IQR) methods detect outliers in numerical dimensions such as request frequency, data transfer volume, and session duration. These methods are computationally inexpensive and work well for metrics with approximately Gaussian distributions. For metrics with heavy-tailed distributions, such as API call rates, Median Absolute Deviation (MAD) provides more robust outlier detection.
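A minimal sketch of the three outlier tests, using only the Python standard library, is shown below. The thresholds (3.0 for the z-score, 1.5 times the IQR, 3.5 for the MAD-based score) are common conventions rather than values taken from this article, and the sample data is invented.

```python
from statistics import mean, median, quantiles, stdev


def zscore_outliers(values, threshold=3.0):
    mu, sigma = mean(values), stdev(values)
    return [v for v in values if sigma and abs(v - mu) / sigma > threshold]


def iqr_outliers(values, k=1.5):
    q1, _, q3 = quantiles(values, n=4)
    low, high = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    return [v for v in values if v < low or v > high]


def mad_outliers(values, threshold=3.5):
    med = median(values)
    mad = median(abs(v - med) for v in values)
    # 0.6745 scales MAD so the score is comparable to a z-score under normality.
    return [v for v in values if mad and 0.6745 * abs(v - med) / mad > threshold]


# Hourly API call counts with two large bursts (invented data).
calls = [98, 102, 101, 97, 100, 103, 99, 100, 104, 96, 5000, 5200]

by_zscore = zscore_outliers(calls)
by_iqr = iqr_outliers(calls)
by_mad = mad_outliers(calls)
```

On this data the z-score test flags nothing, because the two bursts inflate the mean and standard deviation enough to mask themselves, while the IQR and MAD tests both flag 5000 and 5200. That masking effect is the practical reason to prefer median-based statistics for heavy-tailed metrics.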

Machine Learning Models

Unsupervised learning models such as Isolation Forest, Local Outlier Factor (LOF), and autoencoders are well-suited for detecting anomalies without requiring labeled attack data. Isolation Forest works by recursively partitioning the feature space with random splits; anomalous points are isolated in fewer partitions than normal points, so a short average path length across the trees translates into a high anomaly score. Autoencoders learn a compressed representation of normal behavior and flag inputs that produce high reconstruction error as anomalies.
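To make the isolation idea concrete, here is a deliberately small from-scratch sketch in pure Python. It is meant for illustration only; a production system would more likely rely on a maintained implementation such as scikit-learn's `IsolationForest`. The feature pair (bytes per request, requests per hour), the tree count, and the sample size are assumptions.

```python
import math
import random


def c_factor(n):
    """Average path length of an unsuccessful BST search over n points (normalizer)."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    harmonic = math.log(n - 1) + 0.5772156649
    return 2.0 * harmonic - 2.0 * (n - 1) / n


def build_tree(points, depth, max_depth, rng):
    if depth >= max_depth or len(points) <= 1:
        return {"size": len(points)}
    dim = rng.randrange(len(points[0]))
    lo = min(p[dim] for p in points)
    hi = max(p[dim] for p in points)
    if lo == hi:
        return {"size": len(points)}
    split = rng.uniform(lo, hi)  # random cut between the min and max of this node
    left = [p for p in points if p[dim] < split]
    right = [p for p in points if p[dim] >= split]
    return {
        "dim": dim,
        "split": split,
        "left": build_tree(left, depth + 1, max_depth, rng),
        "right": build_tree(right, depth + 1, max_depth, rng),
    }


def path_length(tree, point, depth=0):
    if "size" in tree:  # leaf: add the expected depth of the unbuilt subtree
        return depth + c_factor(tree["size"])
    branch = "left" if point[tree["dim"]] < tree["split"] else "right"
    return path_length(tree[branch], point, depth + 1)


def fit_forest(points, n_trees=100, sample_size=64, seed=0):
    """Build the forest; assumes at least two training points."""
    rng = random.Random(seed)
    size = min(sample_size, len(points))
    max_depth = math.ceil(math.log2(size))
    trees = [build_tree(rng.sample(points, size), 0, max_depth, rng) for _ in range(n_trees)]
    return trees, size


def anomaly_score(forest, point):
    """Score in (0, 1]; shorter average paths give scores closer to 1."""
    trees, size = forest
    mean_path = sum(path_length(t, point) for t in trees) / len(trees)
    return 2.0 ** (-mean_path / c_factor(size))


data_rng = random.Random(1)
normal_points = [(data_rng.gauss(100, 5), data_rng.gauss(12, 2)) for _ in range(300)]
forest = fit_forest(normal_points)

typical = anomaly_score(forest, (100, 12))
outlier = anomaly_score(forest, (1000, 200))
```

The point far from the training cluster is separated after only a few random cuts, so its score comes out higher than that of the point at the center of the cluster.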

In production deployments, Isolation Forest models are commonly retrained on a weekly cadence using a sliding window of the most recent 90 days of data. The model is evaluated against a held-out validation set that includes both confirmed anomalies and known-normal activity to track precision and recall metrics over time.

Real-World Detection Scenarios

Consider a scenario involving a compromised service account in a Kubernetes cluster. The service account normally issues GET requests to the Kubernetes API server to list pods in its namespace, approximately 12 times per hour. An attacker who has obtained the service account token begins using it to list secrets across all namespaces, create new role bindings, and patch deployment configurations. The behavioral anomaly detection system detects the following deviations simultaneously:

- API verb distribution shift: the account’s historical profile shows 98% GET requests. The current session shows 40% PATCH and 20% CREATE operations.

- Namespace scope expansion: the account has never accessed resources outside its designated namespace. It is now querying the kube-system namespace.

- Request rate increase: the current request rate is 8 standard deviations above the historical mean for this account.

- Resource type anomaly: the account has no historical access to Secret or ClusterRoleBinding resources.

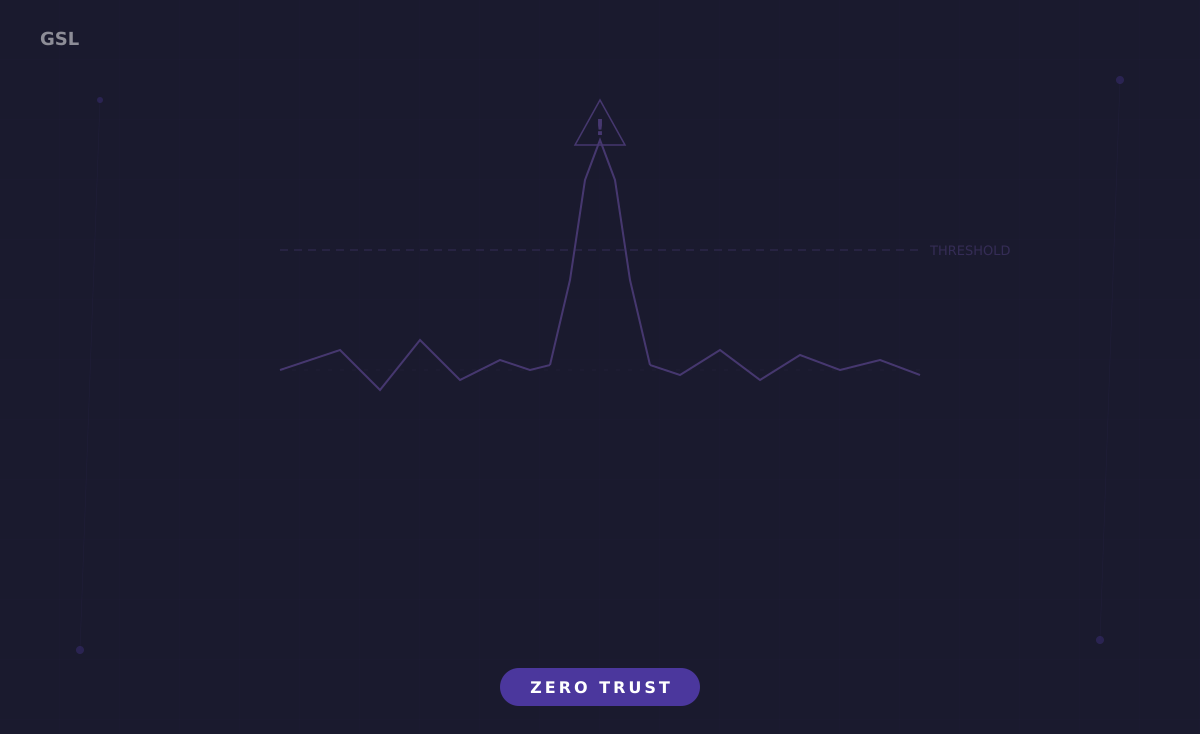

Each of these signals individually might generate a moderate anomaly score. In combination, they produce a composite score that exceeds the critical threshold, triggering an automated response that revokes the service account token and quarantines the pod.
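The article does not specify how the composite score is computed. One plausible approach, sketched below as an assumption, measures the verb shift with total variation distance and combines per-signal scores with a noisy-OR, so that several moderate signals reinforce one another. The per-signal scores of 0.5, the critical threshold of 0.9, and the split of the unspecified percentages (the `OTHER` bucket and the remaining 40% GET) are hypothetical.

```python
from math import prod


def total_variation(baseline, current):
    """Half the L1 distance between two distributions (0 = identical, 1 = disjoint)."""
    verbs = set(baseline) | set(current)
    return 0.5 * sum(abs(baseline.get(v, 0.0) - current.get(v, 0.0)) for v in verbs)


def composite_score(signal_scores):
    """Noisy-OR combination: rises as independent moderate signals accumulate."""
    return 1.0 - prod(1.0 - s for s in signal_scores)


# Verb mix from the scenario; unspecified remainders are filled in as assumptions.
historical = {"GET": 0.98, "OTHER": 0.02}
session = {"GET": 0.40, "PATCH": 0.40, "CREATE": 0.20}

signals = {
    "verb_shift": total_variation(historical, session),
    "namespace_expansion": 0.5,  # hypothetical moderate score
    "request_rate": 0.5,         # hypothetical moderate score
    "resource_type": 0.5,        # hypothetical moderate score
}

CRITICAL_THRESHOLD = 0.9  # assumed value
combined = composite_score(signals.values())
triggers_response = combined >= CRITICAL_THRESHOLD
```

No single signal crosses the assumed threshold, but the combined score of roughly 0.95 does. Noisy-OR treats the signals as independent, which is a simplification; correlated signals would need a more careful weighting.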

Tuning and Operationalizing Anomaly Detection

The most significant operational challenge with behavior-based anomaly detection is managing false positives. Security teams that are overwhelmed by low-fidelity alerts will eventually ignore the system entirely, negating its value. Tuning requires a disciplined feedback loop between the detection system and the analysts who triage its output.

Effective tuning practices include tiered alerting where only composite scores above a high threshold generate actionable alerts while moderate scores are logged for retrospective analysis. Contextual enrichment at alert time, such as correlating the anomaly with concurrent threat intelligence feeds or change management records, helps analysts quickly determine whether the deviation is malicious or benign. Automated suppression rules for known-benign patterns, such as backup service accounts that spike in activity during scheduled maintenance windows, reduce noise without reducing coverage.
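Tiered alerting and suppression can be expressed as a small routing function. The sketch below is one possible shape, with hypothetical names (`SuppressionRule`, `route`, the `svc-backup` account) and assumed thresholds of 0.9 for alerts and 0.5 for logging.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class SuppressionRule:
    # Hypothetical rule: a named entity is expected to spike inside a time window.
    entity: str
    start: datetime
    end: datetime
    reason: str


def route(entity, score, when, rules, alert_threshold=0.9, log_threshold=0.5):
    """Return 'suppress', 'alert', 'log', or 'ignore' for one scored anomaly."""
    for rule in rules:
        if rule.entity == entity and rule.start <= when <= rule.end:
            return "suppress"
    if score >= alert_threshold:
        return "alert"
    if score >= log_threshold:
        return "log"
    return "ignore"


window = SuppressionRule(
    entity="svc-backup",
    start=datetime(2024, 3, 2, 1, 0, tzinfo=timezone.utc),
    end=datetime(2024, 3, 2, 3, 0, tzinfo=timezone.utc),
    reason="scheduled maintenance",
)
during = datetime(2024, 3, 2, 2, 0, tzinfo=timezone.utc)
after = datetime(2024, 3, 2, 9, 0, tzinfo=timezone.utc)

decisions = [
    route("svc-backup", 0.95, during, [window]),  # known-benign spike
    route("svc-backup", 0.95, after, [window]),   # same spike outside the window
    route("alice", 0.6, during, [window]),        # moderate score, kept for review
    route("alice", 0.2, during, [window]),
]
```

Scoping each rule to a specific entity and time window keeps the suppression narrow: the same spike from the backup account outside its maintenance window still raises an alert.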

Organizations should track their anomaly detection system’s performance using precision (the percentage of alerts that were true positives) and mean time to triage (how long it takes an analyst to assess an alert). As a rule of thumb, a well-tuned system in a mature Zero Trust deployment should achieve precision above 70% and a mean time to triage below 15 minutes for critical alerts.
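Both metrics are simple to compute once analysts record a disposition and a triage duration for each alert. The following sketch assumes a hypothetical list of dispositions and durations in minutes; the numbers are invented for illustration.

```python
from statistics import mean


def precision(dispositions):
    """Share of triaged alerts that analysts confirmed as true positives."""
    return sum(d == "true_positive" for d in dispositions) / len(dispositions)


def mean_time_to_triage(durations_minutes):
    """Average number of minutes an analyst needed to assess an alert."""
    return mean(durations_minutes)


dispositions = ["true_positive"] * 15 + ["false_positive"] * 5
triage_minutes = [8, 12, 20, 10]

alert_precision = precision(dispositions)      # 15 of 20 alerts confirmed
mttt = mean_time_to_triage(triage_minutes)     # minutes, critical alerts only
meets_targets = alert_precision > 0.70 and mttt < 15
```

Tracking these values per alert tier and over time, rather than as a single aggregate, makes it easier to see whether a tuning change helped.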

Integration with the Zero Trust Policy Engine

Anomaly detection is most powerful when its output feeds directly into the Zero Trust policy engine rather than existing as an isolated alerting system. When the anomaly detection system determines that a user’s behavior has deviated significantly, the policy engine can automatically reduce the user’s access scope, require re-authentication, or route their traffic through additional inspection layers such as a cloud access security broker (CASB) or data loss prevention (DLP) engine.
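A policy decision function that consumes the anomaly score might look like the sketch below. The action names map to the responses described above, but the thresholds, the 0.2 sensitivity adjustment, and the `decide` function itself are assumptions for illustration, not part of any specific policy engine.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    INSPECT = "route_through_inspection"   # e.g. CASB or DLP
    STEP_UP = "require_reauthentication"
    RESTRICT = "reduce_access_scope"


def decide(anomaly_score, sensitive_resource):
    """Map an anomaly score in [0, 1] to a graduated policy action."""
    # Sensitive resources tolerate less deviation, so raise the effective score.
    effective = min(1.0, anomaly_score + (0.2 if sensitive_resource else 0.0))
    if effective >= 0.9:
        return Action.RESTRICT
    if effective >= 0.7:
        return Action.STEP_UP
    if effective >= 0.4:
        return Action.INSPECT
    return Action.ALLOW


routine = decide(0.1, sensitive_resource=False)
moderate = decide(0.5, sensitive_resource=False)
moderate_sensitive = decide(0.55, sensitive_resource=True)
severe = decide(0.95, sensitive_resource=False)
```

Graduated responses let the engine add friction in proportion to the deviation, so a moderate score on an ordinary resource triggers extra inspection rather than an outright block.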

This integration transforms anomaly detection from a passive monitoring capability into an active defense mechanism. The detection system does not just tell you something is wrong; it triggers an automated response that contains the potential impact while human analysts investigate. In a mature Zero Trust deployment, this closed-loop architecture is what makes the difference between detecting a breach after exfiltration and containing it before data leaves the network.