Why Microsegmentation Is the Backbone of Zero Trust Networking

Traditional network architectures rely on a hardened perimeter with a flat, trusted interior. Once an attacker breaches the outer firewall, lateral movement across subnets, VLANs, and application tiers is largely unimpeded. Microsegmentation inverts this model by enforcing granular access controls between every workload, regardless of whether those workloads sit on the same physical host or span multiple data centers.

In a microsegmented network, each application component operates within its own security boundary. A compromised web server cannot communicate with the database tier unless an explicit policy permits it, and even then the allowed traffic is restricted to specific ports, protocols, and authenticated identities. This article walks through the architectural decisions, tooling choices, and operational patterns required to design microsegmented networks that hold up under real production workloads.

Defining Segmentation Boundaries

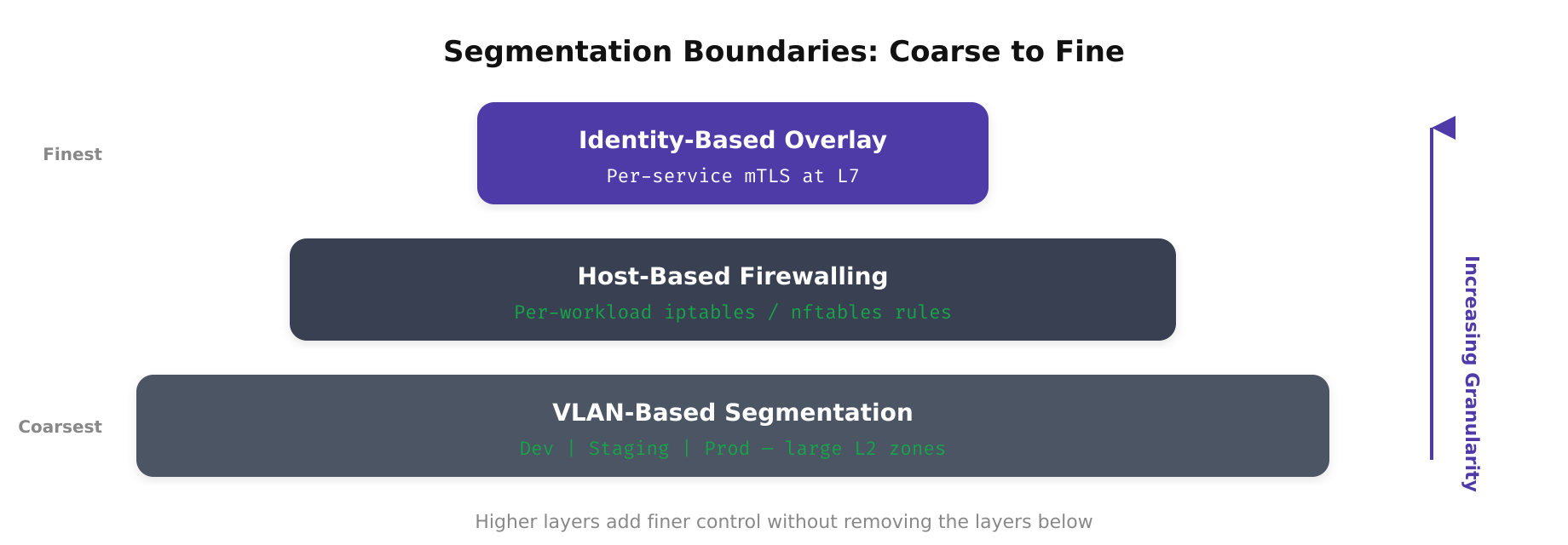

The first design decision is choosing what constitutes a segment. There are three common approaches, each with trade-offs in complexity, visibility, and enforcement fidelity.

- VLAN-based segmentation: Uses Layer 2 broadcast domains to separate traffic. This is the simplest to implement in brownfield environments but offers coarse granularity. You can isolate development from production, but segmenting individual services within production requires additional VLANs and quickly leads to VLAN sprawl.

- Host-based firewalling: Enforces rules directly on each endpoint using iptables, nftables, Windows Firewall, or a commercial agent. This provides workload-level granularity and does not depend on the underlying network topology. However, policy management becomes complex at scale without centralized orchestration.

- Identity-based overlay segmentation: Uses a combination of service mesh, SPIFFE/SPIRE identities, and sidecar proxies to enforce mutual TLS (mTLS) between services. This approach is topology-independent and integrates naturally with Kubernetes and container orchestration platforms.

In practice, most production environments combine all three. VLANs provide coarse isolation between environments, host-based rules restrict traffic at the OS level, and identity-based controls authenticate and authorize service-to-service communication at Layer 7.
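The layered model comes down to one rule: a flow is delivered only if every layer permits it. The minimal Python sketch below illustrates that rule. The VLAN numbers, the SPIFFE IDs, and the per-layer rules are hypothetical placeholders for what would really live in switch ACLs, host firewalls, and mesh authorization policies. The host layer models the firewall on a database host that admits only PostgreSQL.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    src_vlan: int
    dst_vlan: int
    src_id: str  # service identity presented over mTLS (hypothetical SPIFFE IDs)
    dst_id: str
    protocol: str
    port: int


# Hypothetical policy data for each layer.
PROD_VLANS = {100, 110, 120}
DB_HOST_ALLOWED = {("tcp", 5432)}  # database host firewall admits only PostgreSQL
IDENTITY_ALLOWED = {("spiffe://example.org/app", "spiffe://example.org/db")}


def vlan_layer(f: Flow) -> bool:
    # Coarse isolation: production VLANs may only talk to production VLANs.
    return (f.src_vlan in PROD_VLANS) == (f.dst_vlan in PROD_VLANS)


def host_layer(f: Flow) -> bool:
    # OS-level rule on the destination host: protocol/port allowlist.
    return (f.protocol, f.port) in DB_HOST_ALLOWED


def identity_layer(f: Flow) -> bool:
    # Service-to-service authorization based on authenticated identities.
    return (f.src_id, f.dst_id) in IDENTITY_ALLOWED


LAYERS = (vlan_layer, host_layer, identity_layer)


def is_permitted(f: Flow) -> bool:
    """Defense in depth: every layer must independently allow the flow."""
    return all(layer(f) for layer in LAYERS)
```

Because the check uses `all`, a permissive mistake in one layer is still caught by the others. That redundancy is the point of combining the three approaches.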

Free to use, share it in your presentations, blogs, or learning materials.

This diagram highlights the three primary segmentation boundary types used in microsegmented architectures. Network zones carve the environment into broad trust regions, host-based boundaries tighten control at the operating system level on each endpoint, and application-layer segments leverage service identities to govern communication between individual microservices regardless of their physical location.

Building the Policy Model

A microsegmentation policy model must answer four questions for every traffic flow: who is the source, who is the destination, what protocol and port are used, and is this flow necessary for the application to function correctly? The process of discovering and codifying these answers is called application dependency mapping.

Application Dependency Mapping

Before writing a single firewall rule, you need a complete picture of your application’s communication patterns. There are two complementary methods for building this map.

Passive traffic analysis uses flow logs (VPC Flow Logs in AWS, NSG Flow Logs in Azure, or NetFlow/IPFIX from physical switches) to record every observed connection. Over a two-to-four-week observation window, you build a baseline of legitimate traffic. Commercial tools such as Illumio and Guardicore (now part of Akamai) can visualize these flows and suggest policy rules. Open-source options such as Cilium Hubble provide flow visibility in Kubernetes clusters that you can use as input when drafting policies.
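The Python sketch below shows how flow-log records can be reduced to a baseline. It assumes input in the default AWS VPC Flow Logs (version 2) space-separated format and keeps accepted records as distinct (source, destination, protocol, destination port) tuples. The rule that drops records whose destination port is 32768 or higher is a heuristic assumption. Such records are usually the return leg of a connection, because the reply targets the client's ephemeral port.

```python
from collections import Counter

# Field order of the default AWS VPC Flow Logs (version 2) record format.
FIELDS = ("version", "account_id", "interface_id", "srcaddr", "dstaddr",
          "srcport", "dstport", "protocol", "packets", "bytes",
          "start", "end", "action", "log_status")


def build_baseline(lines, min_count=1, ephemeral_floor=32768):
    """Count distinct (src, dst, protocol, dst_port) tuples from accepted records."""
    seen = Counter()
    for line in lines:
        parts = line.split()
        if len(parts) != len(FIELDS):
            continue  # skip malformed lines
        rec = dict(zip(FIELDS, parts))
        # Skip the header line, NODATA/SKIPDATA records, and rejected traffic.
        if rec["log_status"] != "OK" or rec["action"] != "ACCEPT":
            continue
        dport = int(rec["dstport"])
        # Heuristic: an ephemeral destination port usually marks return traffic.
        if dport >= ephemeral_floor:
            continue
        # Protocol is the IANA number (6 = TCP, 17 = UDP).
        seen[(rec["srcaddr"], rec["dstaddr"], int(rec["protocol"]), dport)] += 1
    # Flows seen fewer than min_count times are treated as noise.
    return {flow: n for flow, n in seen.items() if n >= min_count}
```

Raising `min_count` trades completeness for noise reduction. Rare but legitimate flows, such as a monthly batch job, are exactly why the observation window spans weeks rather than days.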

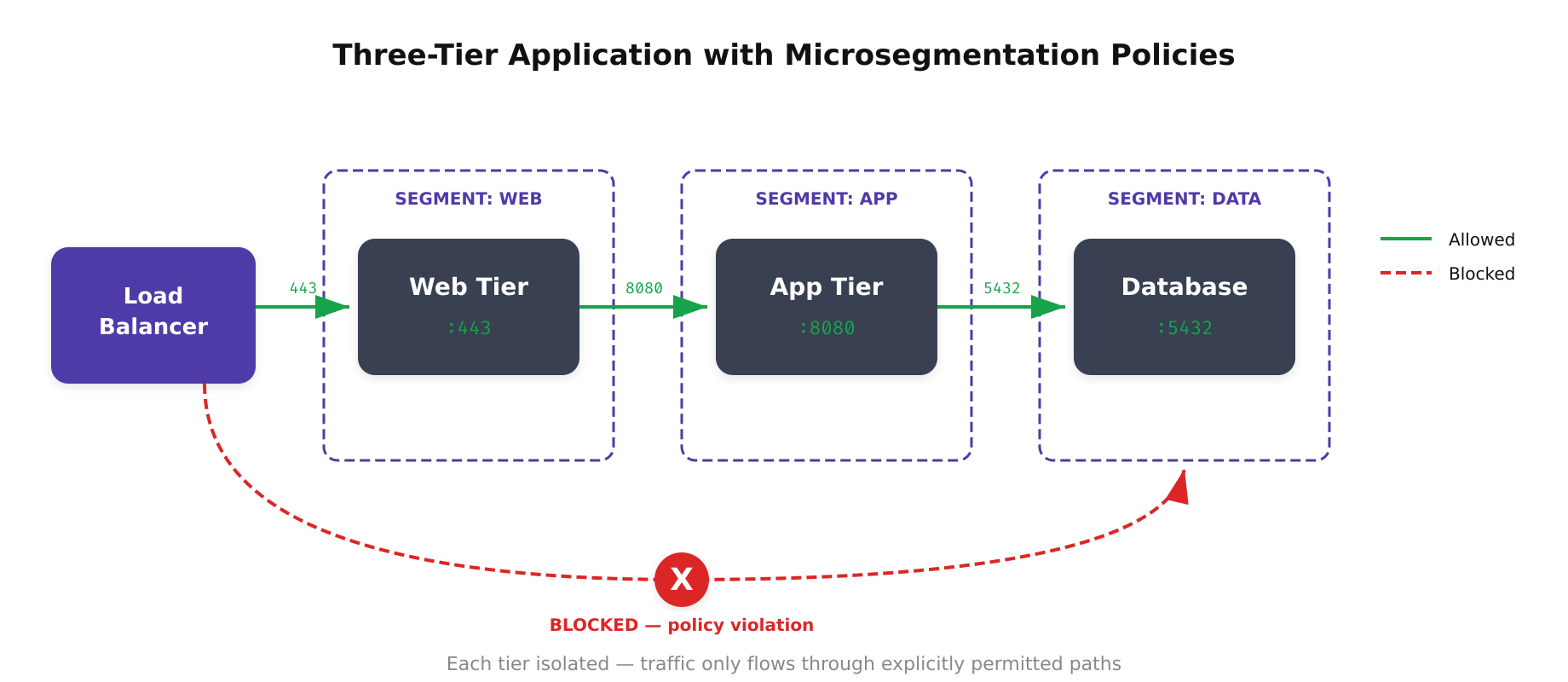

Active application profiling involves working with development teams to document expected communication paths. A three-tier web application, for example, should have the following flows: load balancer to web tier on port 443, web tier to application tier on port 8080, and application tier to database on port 5432. Any traffic outside these paths is unauthorized.
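The documented paths can be written down as an explicit allowlist and compared against observed traffic. Any observed flow missing from the list is flagged, which is the default-deny stance the paragraph describes. In the Python sketch below, the tier names are illustrative labels for the three-tier example.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    src_tier: str
    dst_tier: str
    protocol: str
    port: int


# The three documented flows of the example three-tier application.
EXPECTED = {
    Flow("load-balancer", "web", "tcp", 443),
    Flow("web", "app", "tcp", 8080),
    Flow("app", "db", "tcp", 5432),
}


def unauthorized(observed):
    """Return observed flows that fall outside the documented paths (default deny)."""
    return sorted(set(observed) - EXPECTED)
```

Running observed flows from passive analysis through a check like this also exposes gaps in the documented model. A flagged flow is either an attack or a dependency that the development team forgot to list.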

Policy-as-Code Implementation

Once dependencies are mapped, encode them as declarative policy. In Kubernetes environments, this means writing NetworkPolicy resources or Cilium CiliumNetworkPolicy CRDs. For VM-based workloads, tools like HashiCorp Consul, Calico Enterprise, or VMware NSX allow you to define policies using labels and tags rather than IP addresses.
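Label-based policies stay valid when addresses change because the selector is resolved against the current inventory at evaluation time. The Python sketch below mimics Kubernetes `matchLabels` semantics: every key/value pair in the selector must be present on the workload, and an empty selector matches everything. The inventory dictionary and IP addresses are hypothetical.

```python
from typing import Dict, List, Mapping


def matches(selector: Mapping[str, str], labels: Mapping[str, str]) -> bool:
    """matchLabels-style semantics: all selector pairs must appear on the workload."""
    return all(labels.get(key) == value for key, value in selector.items())


def select(selector: Mapping[str, str],
           inventory: Dict[str, Mapping[str, str]]) -> List[str]:
    """Resolve a selector to the addresses currently carrying matching labels."""
    return sorted(addr for addr, labels in inventory.items() if matches(selector, labels))
```

If an app-tier workload is rescheduled and receives a new IP, `select({"role": "app-tier"}, inventory)` returns the new address without any change to the policy itself. An IP-based rule would need an edit for the same move.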

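Conceptually, a Kubernetes NetworkPolicy for a PostgreSQL pod that should only accept connections from the application tier contains a single ingress rule. That rule selects pods labeled role=app-tier and allows TCP on port 5432. Because the policy selects the database pod, all other ingress to that pod is denied. Pods that no policy selects still accept all traffic, and enforcement requires a CNI plugin that implements NetworkPolicy, such as Calico or Cilium. The policy is keyed on labels rather than node placement or IP addresses, so it follows the workload to whichever node it runs on and can be applied unchanged to other clusters that use the same labels.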
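To keep new code in one language, the following Python sketch builds that NetworkPolicy as a plain dictionary and prints it as JSON, which `kubectl apply -f -` accepts on standard input. The policy name, the namespace `prod`, and the database label `app=postgres` are hypothetical. The `role=app-tier` label and port 5432 come from the example above.

```python
import json


def postgres_ingress_policy(namespace: str = "prod", port: int = 5432) -> dict:
    """Build a NetworkPolicy admitting only app-tier pods to the PostgreSQL pods."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "postgres-allow-app-tier", "namespace": namespace},
        "spec": {
            # The pods this policy protects (hypothetical database label).
            "podSelector": {"matchLabels": {"app": "postgres"}},
            # Listing Ingress isolates the selected pods for inbound traffic.
            "policyTypes": ["Ingress"],
            "ingress": [{
                # A podSelector without a namespaceSelector matches pods
                # in the policy's own namespace.
                "from": [{"podSelector": {"matchLabels": {"role": "app-tier"}}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(postgres_ingress_policy(), indent=2))
```

Saved as a script (for example, a hypothetical `make_policy.py`), it can be piped straight into the cluster with `python make_policy.py | kubectl apply -f -`. Generating manifests from code keeps them reviewable in the same pipeline as the rest of the policy set.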

Free to use, share it in your presentations, blogs, or learning materials.

The above illustration demonstrates how microsegmentation enforces strict traffic flow between application tiers. The load balancer can only reach the web tier on port 443, the web tier communicates with the app tier on port 8080, and only the app tier can access the database on port 5432. Any attempt to bypass tiers, such as a direct connection from the load balancer to the database, is blocked by policy.

Enforcement Architecture Patterns

Where you enforce microsegmentation policies has significant implications for performance, reliability, and operational complexity.

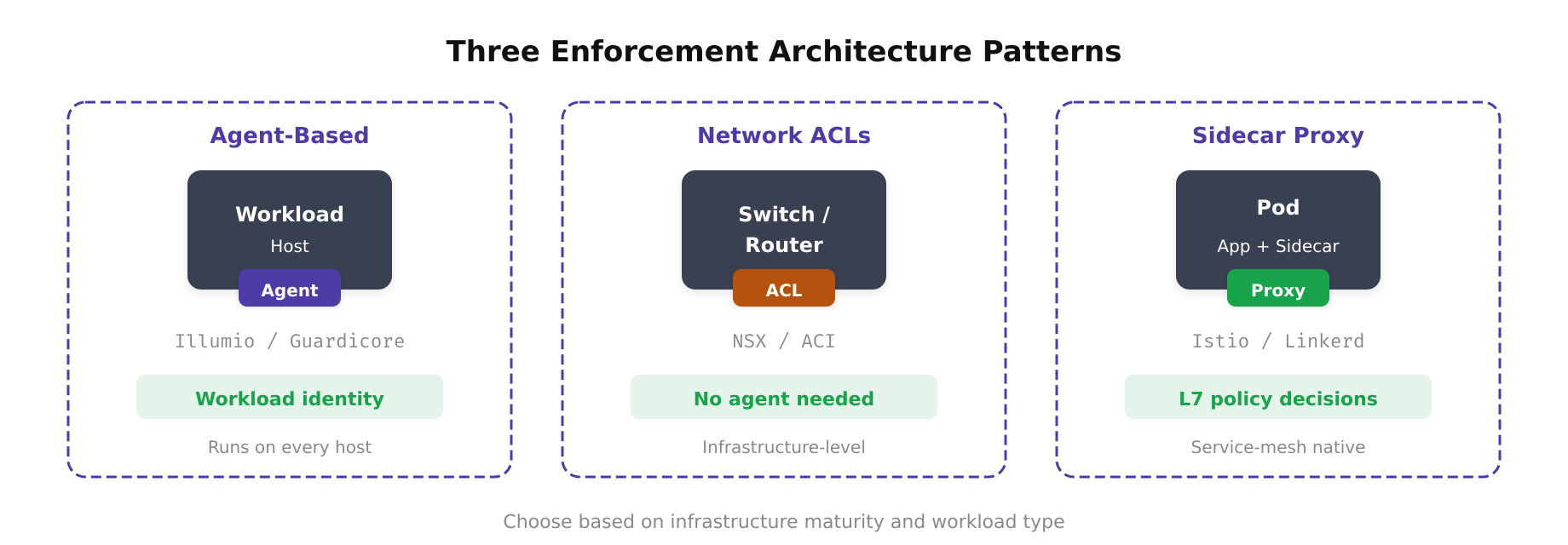

- Distributed enforcement (agent-based): Each workload runs an agent that programs local firewall rules. This approach scales out with the fleet because each host enforces only its own rules, but it requires deploying and managing an agent on every host. If the agent fails or is tampered with, the workload may lose protection.

- Network-level enforcement (switch/router ACLs): Policies are pushed to network devices. This provides enforcement without modifying endpoints but requires programmable infrastructure (SDN controllers, modern switch firmware) and cannot easily enforce Layer 7 policies.

- Sidecar proxy enforcement: A proxy (Envoy, linkerd2-proxy) runs alongside each workload and handles mTLS termination, authentication, and authorization. This is the default model in service mesh architectures and provides the richest policy vocabulary (HTTP path, method, headers), but it adds latency and resource overhead.
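The richer vocabulary of sidecar enforcement is easiest to see as a decision function. After the mTLS handshake, the proxy knows the caller's identity and can match it together with the HTTP method and path. The Python sketch below is a simplified model of that decision, not any mesh's actual API. The Istio-style SPIFFE IDs (`spiffe://<trust-domain>/ns/<namespace>/sa/<service-account>`) and the rule itself are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Sequence


@dataclass(frozen=True)
class Rule:
    source_id: str             # SPIFFE ID of the allowed caller
    methods: FrozenSet[str]    # allowed HTTP methods
    path_prefix: str           # allowed URL path subtree


RULES = [
    Rule("spiffe://example.org/ns/prod/sa/web", frozenset({"GET", "POST"}), "/api/orders"),
]


def path_allowed(path: str, prefix: str) -> bool:
    # Match the prefix itself or a sub-path, so "/api/orders" does not admit "/api/ordersadmin".
    base = prefix.rstrip("/")
    return path == base or path.startswith(base + "/")


def authorize(peer_id: str, method: str, path: str, rules: Sequence[Rule] = RULES) -> bool:
    """Default deny: allow only if some rule matches identity, method, and path."""
    return any(
        peer_id == r.source_id and method in r.methods and path_allowed(path, r.path_prefix)
        for r in rules
    )
```

Network ACLs and host firewalls cannot express a rule like "web may GET and POST orders but not DELETE them". That Layer 7 expressiveness is what the extra proxy hop pays for.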

For hybrid environments spanning on-premises and cloud, a layered approach works best. Use network ACLs for coarse inter-zone segmentation, host-based agents for workload isolation, and sidecar proxies for service-to-service authentication where application-layer visibility is required.
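One way to put the layered approach into practice is to decide, per rule, which enforcement points must implement it. The Python sketch below encodes that assignment under stated assumptions. Every rule gets host-agent enforcement for workload isolation. Rules that cross a zone boundary are also pushed to network ACLs. Rules that need HTTP-level matching are also given to the sidecar. The `PolicyRule` shape and the layer names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class PolicyRule:
    src_zone: str
    dst_zone: str
    port: int
    http_paths: Tuple[str, ...] = ()  # non-empty means Layer 7 matching is required


def enforcement_points(rule: PolicyRule) -> List[str]:
    """Return the layers that should enforce this rule, from network edge to application."""
    points = ["host-agent"]  # every rule gets workload-level L3/L4 enforcement
    if rule.src_zone != rule.dst_zone:
        points.insert(0, "network-acl")  # coarse inter-zone control on network devices
    if rule.http_paths:
        points.append("sidecar")  # only the proxy can match paths and methods
    return points
```

Keeping this mapping in code makes it obvious when a rule needs Layer 7 features in a zone where no sidecar is deployed.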

Free to use, share it in your presentations, blogs, or learning materials.

As shown above, the three enforcement patterns operate at different layers of the stack. Agent-based enforcement programs firewall rules directly on each host, network-based enforcement relies on programmable switches and SDN controllers, and sidecar proxy enforcement intercepts traffic at the application layer to provide identity-aware, Layer 7 policy decisions. Organizations typically combine these patterns to achieve defense in depth across their hybrid infrastructure.

Operational Considerations and Failure Modes

Microsegmentation introduces operational complexity that must be managed proactively. The most common failure modes include policy misconfiguration that blocks legitimate traffic, agent failures that leave workloads either fully open or fully isolated, and policy drift as applications evolve without corresponding policy updates.

To mitigate these risks, implement the following operational practices:

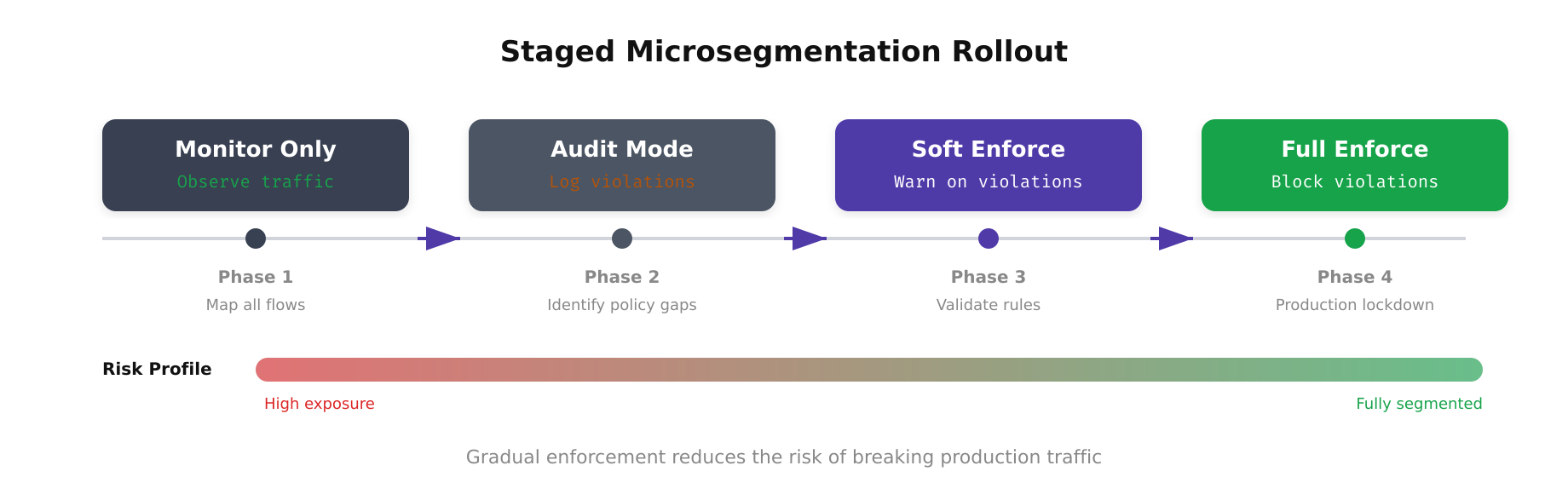

- Staged rollout: Deploy policies in audit/monitor mode first. Log all traffic that would have been blocked without actually blocking it. Review the logs for false positives before switching to enforcement mode.

- Canary enforcement: Enable enforcement on a small subset of workloads (10-15%) before rolling out to the full fleet. Monitor error rates, latency, and connectivity metrics closely during this phase.

- Policy CI/CD: Treat segmentation policies like application code. Store them in version control, run automated validation (syntax checks, conflict detection, reachability analysis), and deploy through a pipeline with approval gates.

- Break-glass procedures: Define a well-documented process for rapidly disabling enforcement in an emergency. This might involve a single command that switches all policies to audit mode or a pre-defined “allow all” policy that can be activated within seconds.
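Audit mode, canary enforcement, and break-glass can all be modeled as switches on a single decision function. The Python sketch below is a simplified, hypothetical enforcer, not any product's API. Audit mode records flows that would have been blocked but lets them through. A hash of the workload ID deterministically places roughly `canary_percent` percent of workloads into enforcement. `break_glass()` reverts everything to audit mode.

```python
import hashlib
from enum import Enum


class Mode(Enum):
    AUDIT = "audit"
    ENFORCE = "enforce"


def in_canary(workload_id: str, percent: int) -> bool:
    """Deterministically place roughly `percent`% of workloads in the canary cohort."""
    bucket = int(hashlib.sha256(workload_id.encode()).hexdigest(), 16) % 100
    return bucket < percent


class Enforcer:
    def __init__(self, allowed, mode: Mode = Mode.AUDIT, canary_percent: int = 0):
        self.allowed = set(allowed)
        self.mode = mode
        self.canary_percent = canary_percent
        self.would_block = []  # audit-mode log for false-positive review
        self.blocked = []

    def break_glass(self) -> None:
        """Emergency switch: stop blocking everywhere and fall back to audit mode."""
        self.mode = Mode.AUDIT
        self.canary_percent = 0

    def decide(self, workload_id: str, flow) -> bool:
        """Return True if the flow is let through."""
        if flow in self.allowed:
            return True
        enforcing = self.mode is Mode.ENFORCE or in_canary(workload_id, self.canary_percent)
        if enforcing:
            self.blocked.append((workload_id, flow))
            return False
        self.would_block.append((workload_id, flow))  # log only, do not block
        return True
```

Hashing the workload ID keeps canary membership stable across restarts, so the same workloads stay under enforcement while error rates and latency are compared against the rest of the fleet.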

The above illustration depicts the staged rollout timeline for microsegmentation deployment. Starting with a discovery phase to map application dependencies, the process moves through audit mode where policies are tested without blocking traffic, then to canary enforcement on a small percentage of workloads, and finally to full enforcement across the fleet. Each stage includes validation checkpoints and rollback procedures to minimize the risk of service disruption.

Measuring Segmentation Effectiveness

Deploying microsegmentation is not the end state; you must continuously validate that it works as intended. Key metrics to track include:

- Segmentation coverage: the percentage of workloads with active enforcement.

- Policy violations detected per week, which indicate either misconfigurations or actual intrusion attempts.

- Mean time to update a policy when a new service is deployed.

- The blast radius of a simulated compromise.

Red team exercises are particularly valuable. Have your offensive security team compromise a workload and measure how far they can move laterally. In a well-segmented network, a compromised web server should be unable to reach the database directly, unable to scan adjacent subnets, and unable to exfiltrate data through unauthorized egress paths. If the red team can pivot beyond the compromised segment, your policies need tightening.
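Before a red team exercise, the blast radius can be estimated from the policy itself. Treat allowed flows as directed edges and compute everything reachable from the compromised workload. The Python sketch below uses breadth-first search. It makes the worst-case assumption that any workload an attacker can reach can also be used as a further pivot. The workload names are illustrative.

```python
from collections import deque
from typing import Dict, Iterable, Set, Tuple


def blast_radius(allowed_edges: Iterable[Tuple[str, str]], compromised: str) -> Set[str]:
    """Return every workload reachable from `compromised` by chaining allowed flows."""
    graph: Dict[str, Set[str]] = {}
    for src, dst in allowed_edges:
        graph.setdefault(src, set()).add(dst)
    reached = {compromised}
    queue = deque([compromised])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt not in reached:
                reached.add(nxt)
                queue.append(nxt)
    reached.discard(compromised)  # report only the other workloads at risk
    return reached
```

For the three-tier example (load balancer to web, web to app, app to database), a compromised web server can reach the database only by first compromising the app tier, because no direct web-to-database edge exists. In a flat network where every workload can reach every other, the same call returns the entire fleet.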

Microsegmentation is not a product you install but a discipline you practice. It requires sustained investment in dependency mapping, policy management, and continuous validation. When done correctly, it transforms your network from a flat attack surface into a series of isolated compartments, each of which must be individually breached. This is the fundamental promise of Zero Trust networking, and microsegmentation is how you deliver on it.